Contemporary research on consciousness is ambiguous, like the double-faced god Janus. On the one hand, it has achieved impressive practical results. Today we can detect conscious activity in the brain for a number of purposes, including better therapeutic approaches for people affected by disorders of consciousness such as coma, vegetative state and minimally conscious state. On the other hand, the field is marked by deep controversy about methodology and basic definitions. As a result, we still lack an overarching theory of consciousness, that is to say, a theoretical account that scholars agree upon.

Developing a common theoretical framework is increasingly recognized as crucial to understanding consciousness and to assessing related issues, such as emerging ethical questions. The challenge is to find common ground among the various experimental and theoretical approaches. A strong candidate that is gaining increasing consensus is the notion of complexity. The basic idea is that consciousness can be explained as a particular kind of neural information processing. The idea of associating consciousness with complexity was originally suggested by Giulio Tononi and Gerald Edelman in a 1998 paper titled "Consciousness and complexity". Since then, several papers have explored its potential as the key to a common understanding of consciousness.

Despite the increasing popularity of the notion, there are some theoretical challenges that need to be faced, particularly concerning the supposed explanatory role of complexity. These challenges are not only philosophically relevant. They might also affect the scientific reliability of complexity and the legitimacy of invoking this concept to interpret emerging data and to build scientific explanations. In addition, the theoretical challenges have a direct ethical impact, because an unreliable conceptual assumption may lead to misplaced ethical choices. For example, we might wrongly assume that a patient with low complexity is not conscious, or vice versa, and consequently make medical decisions that are inappropriate to the patient's actual clinical condition.

The claimed explanatory power of complexity is challenged in two main ways: semantically and logically. Let us take a quick look at both.

Semantic challenges arise from the fact that complexity is such a general and open-ended concept. It lacks a definition shared across people and disciplines. This open-ended generality and lack of definition can be a barrier to a common scientific use of the term, which may weaken its explanatory value in relation to consciousness. In the landmark paper by Tononi and Edelman, complexity is defined as a combination of integration (conscious experience is unified) and differentiation (we can experience a large number of different states). It is important to recognise that this technical definition of complexity refers only to the state of consciousness, not to its contents. This means that complexity-related measures can give us relevant information about the level of consciousness, yet they remain silent about the corresponding contents and their phenomenology. This is an ethically salient point, since the dimensions of consciousness that appear most relevant to ethical decisions are those related to subjective positive and negative experiences. For instance, while how we treat a machine is generally considered ethically neutral, it is considered ethically wrong to cause negative experiences to other humans or to animals.

Logical challenges concern the justification for invoking complexity to explain consciousness. This justification usually takes one of two forms: bottom-up (from data to theory) or top-down (from phenomenology to physical structure). Both raise specific issues.

Bottom-up: Starting from empirical data indicating that particular brain structures or functions correlate with particular conscious states, relevant theoretical conclusions are inferred. More specifically, since the brains of manifestly conscious subjects exhibit complex (that is, integrated and differentiated) patterns, we are supposedly justified in inferring that complexity indexes consciousness. This conclusion may be a legitimate inference to the best explanation, but the fact that a conscious state correlates with a complex brain pattern in healthy subjects does not justify generalising the correlation to all possible conditions (for example, disorders of consciousness), and it does not logically imply that complexity is a necessary and/or sufficient condition for consciousness.

Top-down: Starting from certain characteristics of personal experience, we are supposedly justified in inferring corresponding characteristics of the underlying physical brain structure. More specifically, if some conscious experience is complex in the technical sense of being both integrated and differentiated, we are supposedly justified in inferring that the correlated brain structures must be complex in the same technical sense. This conclusion does not seem logically justified unless we start from the assumption that consciousness and the corresponding physical brain structures must be similarly structured. Otherwise it is logically possible that conscious experience is complex while the corresponding brain structure is not, and vice versa. In other words, it does not appear justified to infer that, since our conscious experience is integrated and differentiated, the corresponding brain structure must also be integrated and differentiated. This is a possibility, but not a necessity.

The above-mentioned theoretical challenges do not deny the practical utility of complexity as a relevant measure in specific clinical contexts, for example, to quantify residual consciousness in patients with disorders of consciousness. What is at stake is the explanatory status of the notion. Even if we question complexity as a key factor in explaining consciousness, we can still acknowledge that it is practically relevant and useful. In other words, while complexity as an explanatory category raises serious conceptual challenges that remain to be faced, at the practical level it is one of the most promising tools we have to date for improving the detection of consciousness and for implementing effective therapeutic strategies.

I assume that Giulio Tononi and Gerald Edelman hoped that their theory about the connection between consciousness and complexity would finally dispel the embarrassing ambiguity of consciousness research, but the deep theoretical challenges suggest that we will have to live with the resemblance to the double-faced god Janus for a while longer.

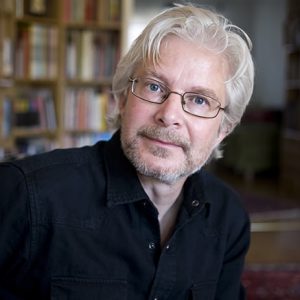

Written by…

Michele Farisco, Postdoc Researcher at Centre for Research Ethics & Bioethics, working in the EU Flagship Human Brain Project.

Tononi, G. and G. M. Edelman. 1998. Consciousness and complexity. Science 282(5395): 1846-1851.
